Apache Spark has become one of the key cluster-computing frameworks in the world. Spark can be applied to a range of workloads, including Machine Learning, streaming data, and graph processing, and it supports programming languages such as Python, Scala, Java, and R. In this section, we will understand what Apache Spark is.

Apache Hadoop and Apache Spark

One of the biggest challenges with respect to Big Data is analyzing the data. There are multiple solutions available to do this. The most popular one is Apache Hadoop.

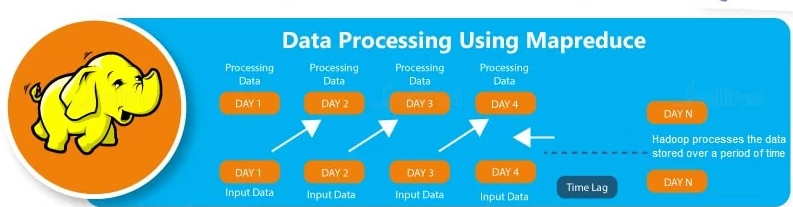

Apache Hadoop is an open-source framework written in Java that allows us to store and process Big Data in a distributed environment, across clusters of computers, using simple programming constructs. To do this, Hadoop uses a programming model called MapReduce, which divides a task into small parts and assigns them to a set of computers. Hadoop also has its own file system, Hadoop Distributed File System (HDFS), which is modeled on Google File System (GFS). HDFS is designed to run on low-cost hardware.

Apache Spark is an open-source distributed cluster-computing framework. Spark is a data processing engine developed to provide faster and easier-to-use analytics than Hadoop MapReduce. Spark was originally developed at the AMPLab of the University of California, Berkeley, and was later donated to the Apache Software Foundation.

Hadoop Vs. Spark

Although Hadoop is widely regarded as one of the most powerful Big Data tools, it has several drawbacks. Some of them are:

- Low Processing Speed: In Hadoop, the MapReduce algorithm, which is a parallel and distributed algorithm, processes very large datasets in two tasks. Intermediate results are written to disk between these tasks, which slows processing down:

  - Map: Map takes a set of data as input and converts it into another set of data, in which individual elements are broken down into key/value pairs.

  - Reduce: The output of the Map task is fed into Reduce as input. As the name suggests, the Reduce task combines those key/value pairs into a smaller set of tuples. The Reduce task always runs after the Map task.

- Batch Processing: Hadoop relies on batch processing, that is, collecting data first and processing it in bulk later. Although batch processing is efficient for high volumes of data, it cannot process streamed data as it arrives, so results are available only after the whole batch has been processed.

- No Data Pipelining: Hadoop does not support data pipelining (i.e., a sequence of stages where the previous stage’s output is the next stage’s input).

- Not Easy to Use: MapReduce developers need to write their own code for every operation, which makes it difficult to work with. MapReduce also has no interactive mode.

- Latency: In Hadoop, the MapReduce framework is relatively slow, since it has to handle different formats, structures, and huge volumes of data.

- Lengthy Lines of Code: Since Hadoop is written in Java, MapReduce code tends to be lengthy, so programs take more time to write and maintain.
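The Map and Reduce tasks described in the list above can be illustrated with a word count. The following is a minimal single-machine sketch in plain Python, not Hadoop's actual API: the function names `map_phase`, `shuffle`, and `reduce_phase` and the sample input are assumptions made for illustration, and a real cluster would run these phases in parallel on many machines.

```python
from collections import defaultdict


def map_phase(lines):
    """Map: turn each input line into (word, 1) key/value pairs."""
    for line in lines:
        for word in line.lower().split():
            yield (word, 1)


def shuffle(pairs):
    """Group values by key so that each key is handled by one reducer."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce: combine the values of each key into a smaller result."""
    return {key: sum(values) for key, values in grouped.items()}


# Hypothetical sample input, one string per input record.
lines = ["spark and hadoop", "hadoop uses mapreduce", "spark is fast"]

# Reduce always runs after Map, with a grouping step in between.
word_counts = reduce_phase(shuffle(map_phase(lines)))
print(word_counts)
```

Even in this small form, every step has to be written by hand, which hints at why MapReduce programs tend to be long.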

Given these drawbacks of Hadoop, there was clear scope for improvement, which is why Spark was introduced.

How Is Spark Better than Hadoop?

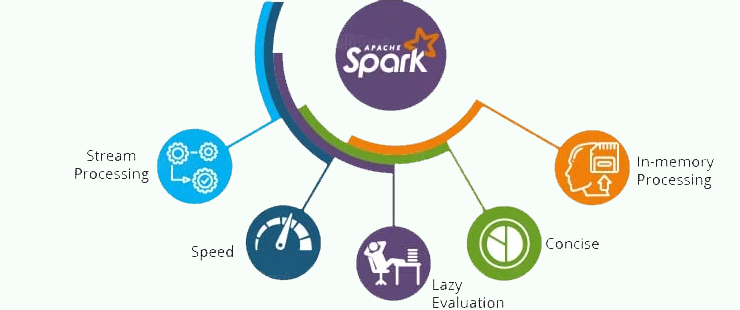

- In-memory Processing: In-memory processing is faster than Hadoop’s disk-based processing, as no time is spent moving data in and out of the disk. Spark can run up to 100 times faster than MapReduce for workloads that fit in memory.

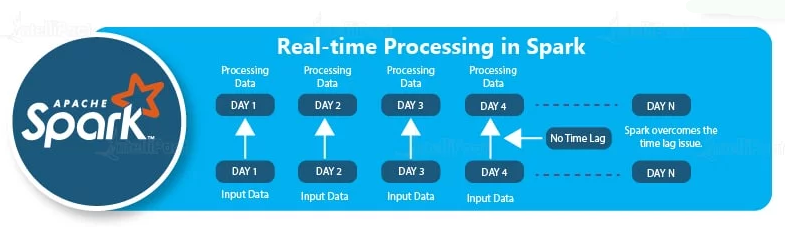

- Stream Processing: Apache Spark supports stream processing, which involves continuous input and output of data. Stream processing is also called real-time processing.

- Less Latency: Apache Spark is faster than Hadoop because it can cache input and intermediate data in memory using the Resilient Distributed Dataset (RDD). An RDD manages the distributed processing of data and the transformations applied to that data, and it is where Spark performs most of its operations. Each RDD is divided into logical partitions, which can then be computed on different nodes of a cluster.

- Lazy Evaluation: Apache Spark starts evaluating only when it is absolutely needed: transformations are merely recorded, and computation starts when an action asks for a result. This plays an important role in contributing to its speed.

- Fewer Lines of Code: Although Spark offers APIs in both Scala and Java, it is implemented mainly in Scala, so a Spark program usually needs fewer lines of code than the equivalent Hadoop program.
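Two of the ideas in the list above, partitioned datasets and lazy evaluation, can be sketched in a few lines of plain Python. The `MiniRDD` class below is a hypothetical toy written for this illustration; it is not Spark's RDD API and it runs the partitions one after another on a single machine, whereas Spark could compute them on different nodes.

```python
class MiniRDD:
    """Toy stand-in for an RDD: partitioned data plus pending steps."""

    def __init__(self, partitions, steps=()):
        self.partitions = partitions  # list of lists, one per logical partition
        self.steps = tuple(steps)     # recorded transformations, not yet run

    @classmethod
    def from_list(cls, data, num_partitions):
        # Split the data round-robin into logical partitions.
        return cls([data[i::num_partitions] for i in range(num_partitions)])

    def map(self, fn):
        # Transformation: only record the step; nothing is computed yet.
        return MiniRDD(self.partitions, self.steps + (("map", fn),))

    def filter(self, predicate):
        # Transformation: also recorded lazily.
        return MiniRDD(self.partitions, self.steps + (("filter", predicate),))

    def _run_partition(self, part):
        # Apply every recorded step to one partition, in order.
        for kind, fn in self.steps:
            if kind == "map":
                part = [fn(x) for x in part]
            else:
                part = [x for x in part if fn(x)]
        return part

    def collect(self):
        # Action: this is the point where the recorded steps actually run.
        results = []
        for part in self.partitions:
            results.extend(self._run_partition(part))
        return results


calls = []  # records every time square() really executes


def square(x):
    calls.append(x)
    return x * x


rdd = MiniRDD.from_list(list(range(1, 7)), num_partitions=2)
pending = rdd.map(square).filter(lambda x: x % 2 == 0)
calls_before_action = len(calls)  # still 0: nothing has been evaluated

result = sorted(pending.collect())
print(calls_before_action, result)
```

Because `map` and `filter` only record what should happen, no work is done until `collect` is called. Deferring the work in this way is what gives an engine the chance to plan the whole chain of steps before running it.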

Components of Spark

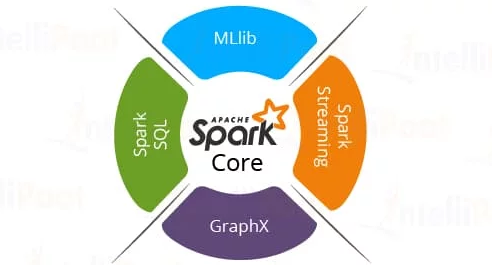

Spark as a whole consists of various libraries, APIs, databases, etc. The main components of Apache Spark are as follows:

- Spark Core

Spark Core is the basic building block of Spark, which includes all components for job scheduling, performing various memory operations, fault tolerance, and more. Spark Core is also home to the RDD API, which provides operations for building and manipulating data in RDDs.

- Spark SQL

Apache Spark works with structured and semi-structured data using its ‘go to’ tool, Spark SQL. Spark SQL allows querying data via SQL, as well as via Apache Hive’s form of SQL called Hive Query Language (HQL). It also supports data from various sources such as Hive tables, Parquet files, log files, and JSON. Spark SQL allows programmers to combine SQL queries with the programmatic manipulations supported by RDDs in Python, Java, Scala, and R.

- Spark Streaming

Spark Streaming processes live streams of data. Data generated by various sources is processed as it arrives. Examples of this data include log files and messages containing status updates posted by users.

- GraphX

GraphX is Apache Spark’s library for manipulating graphs and enabling graph-parallel computation. It includes a number of common graph algorithms that help users simplify graph analytics.

- MLlib

Apache Spark comes with a library of common Machine Learning (ML) functionality called MLlib. It provides various types of ML algorithms, including regression, clustering, and classification, which can be applied to data to extract meaningful insights.
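As an illustration of the Spark Streaming component described above, the sketch below shows the micro-batch idea: a live stream is cut into small batches, and each batch is processed as soon as it is complete. This is plain Python, not Spark's API. The helper names `micro_batches` and `count_errors` and the sample log lines are assumptions made for this example, and batches are cut by item count here for simplicity, whereas Spark Streaming cuts them by time interval.

```python
from itertools import islice


def micro_batches(stream, batch_size):
    """Cut a (possibly endless) stream of events into small batches."""
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return  # the stream is exhausted
        yield batch


def count_errors(batch):
    """Per-batch computation: how many log lines report an error."""
    return sum(1 for line in batch if "ERROR" in line)


# Hypothetical log lines standing in for a live source.
log_stream = [
    "INFO start", "ERROR disk full", "INFO retry",
    "ERROR timeout", "ERROR timeout", "INFO done",
    "INFO idle",
]

# Each batch is processed on its own, so results appear while data still flows.
errors_per_batch = [count_errors(b) for b in micro_batches(log_stream, 3)]
print(errors_per_batch)
```

Each entry in `errors_per_batch` is available as soon as its batch has been read, rather than after the whole input has been collected.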